TL;DR

- A mini PC ordered off Amazon Australia arrived within 24 hours. By mid-morning the next day it was out of bubble wrap and running an autonomous AI engineering team.

- That team (gstack, an open-source Claude Code skill pack) planned, built, reviewed, tested, and shipped a working feature to production in about five hours.

- Three AI-run review phases caught two design mistakes and one critical bug before a line of code was written — including a bug that would have silently corrupted data once the project scaled.

- The AI asked me for help five times, each time with clear options. It never guessed.

- While verifying the deployment, the process surfaced a separate production bug that had been silently broken for weeks.

- Total cost of the AI tooling: zero. It’s all open source.

What this actually means: the interesting shift in AI-assisted software work right now isn’t bigger or smarter models — those keep improving in the background. It’s the scaffolding built around them. Structured review workflows like this catch the kinds of mistakes that standard AI tools ship, while staying honest about what they don’t know. For anyone watching AI transform knowledge work, this is what the next step actually looks like in practice: less a single robot writing code, more a process that thinks before it builds.

At 7:47 AM on a Tuesday, a Minisforum UM890 Pro mini PC was still wrapped in bubble wrap on my desk. By mid-afternoon it had shipped a feature end-to-end — Linear tickets to merged PR to production canary — run by an autonomous AI engineering team installed on it earlier that morning. Eight hours. Bubble wrap to merged PR.

The AI team was gstack — an open-source add-on for Claude Code, written by Garry Tan. It’s built around a sprint structure: plan, build, review, test, ship. I pointed it at one task (a CSV export feature for a personal side project — nine smaller tickets grouped inside one epic in Linear, the task-tracking tool), told it to start, and watched. The full loop ran on its own. It only stopped to ask me for help five times.

The stack

- Hardware: Minisforum UM890 Pro (AMD Ryzen 9 8945HS, 32GB RAM, ~$1,340 AUD). A small, powerful desktop PC.

- Operating system: Ubuntu Server 24.04 LTS, headless. Linux, with no screen attached.

- Remote access: Tailscale (mesh VPN + SSH). A private network so I can log in from anywhere.

- AI tooling: Claude Code + gstack (MIT licensed). The AI that does the coding work.

- Task management: Linear (via MCP). Where I track what needs doing.

- Browser testing: Playwright + headless Chromium. Automated clicks in an invisible browser.

- The app being changed: Next.js 14 + Supabase + Vitest + TypeScript. The underlying website and database.

- Where it runs online: Vercel + Cloudflare DNS. The hosting and domain setup.

Before a line of code was written, gstack ran three independent reviews of the plan itself: a commercial sanity check, a design review, and an engineering review. Same plan, three different perspectives, each scoring it and proposing edits. Anything flagged by two reviewers or more counted as a consensus catch — the thing the author missed in their own framing.

Three catches landed. Two of them appeared in multiple reviews at once; the third came from the engineering review alone, and was the most important of the three.

The bugs that didn’t ship

Catch #1: The overbuilt design

The problem: The plan called for a popup window where users would pick which columns they wanted to export. It looked sensible on paper.

The risks: Two extra days of building. A more complicated interface for the 95% of users who just want to click “download” and get the whole list.

Options considered: Ship the popup as planned. Ship a simpler button-first version and add the popup later. Drop the column-picking feature entirely.

How it was resolved: The CEO reviewer and the design reviewer independently flagged the same issue from different angles — the CEO reviewer on scope, the design reviewer on flow. Resolution: make the button the primary action, tuck the column-picking behind a small menu for the rare user who wants to customise. Ship the simple version today; add complexity only if users ask.

What this prevented: Two days of unnecessary engineering. An over-engineered interface for almost every user. The kind of scope creep that quietly bloats side projects until nobody wants to work on them.

Catch #2: The skipped tests

The problem: The plan proposed shipping without tests. The reasoning sounded reasonable — the project had no testing framework installed yet, and setting one up was framed as “its own initiative” that could come later.

The risks: In a codebase that exports data — where a small mistake silently corrupts files rather than breaking loudly — “we’ll add tests later” almost always means “we never will.” Every team has a backlog of code that was supposed to be tested eventually.

Options considered: Ship without tests and add them later. Ship with a minimum set of tests covering just this feature. Defer the whole feature until the testing framework is in place.

How it was resolved: The engineering reviewer flagged it: “defer tests on a data-export endpoint and you will never come back to them.” The CEO reviewer independently reached the same conclusion from the scope side. Resolution: install vitest (a JavaScript testing framework) and write five tests inside this same pull request. Thirty minutes of additional work. Non-negotiable.

What this prevented: A data-export feature shipping with zero coverage into a codebase with no testing habit. By the time the feature actually shipped, the count was fourteen tests, not five — once the infrastructure was in place, the team kept going.

Catch #3: The silent 1,000-row bug

The problem: The plan called for reading domains out of the database and returning them as a CSV file. Looked straightforward.

The risks: The database, by default, only ever returns the first 1,000 results — and it does so silently, with no warning. Once I had more than a thousand domains in my portfolio, my export would have quietly started missing rows, and neither I nor any user would have known. Not a broken file. Not an error. A correct-looking file with the last rows invisibly dropped.

Options considered: Ignore the cap and hope the portfolio never crossed a thousand. Raise the cap explicitly in the query. Raise the cap and verify after the fact that the server actually returned everything it claimed to.

How it was resolved: The engineering reviewer rated this critical — a ship-blocker. Resolution: raise the query cap to 50,000 rows, and add a sanity check that throws an error if the server reports more rows exist than it actually returned. Two lines of code total.

What this prevented: The fix was two lines of code. The cost of missing it could have been months of corrupted data.
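For readers who want to see the shape of that sanity check, here is a minimal TypeScript sketch. It assumes the data layer hands back both the rows it returned and the total count the server says match the query. The names `QueryResult` and `assertNotTruncated` are invented for illustration and are not taken from the project's code.

```typescript
// Hypothetical shape: what a data layer might hand back for one query.
interface QueryResult<Row> {
  rows: Row[];
  // Total matching rows as reported by the server, or null if no count was requested.
  totalCount: number | null;
}

// Throws if the server says more rows exist than it actually returned.
// Returns the rows unchanged when the result is complete.
function assertNotTruncated<Row>(result: QueryResult<Row>): Row[] {
  if (result.totalCount === null) {
    throw new Error("Cannot verify export completeness without a row count");
  }
  if (result.totalCount > result.rows.length) {
    throw new Error(
      `Export truncated: server reported ${result.totalCount} rows ` +
        `but returned ${result.rows.length}`
    );
  }
  return result.rows;
}
```

The point of the design is that a short read becomes a loud failure instead of a correct-looking file. Raising the cap alone would only move the silent cliff from 1,000 rows to 50,000.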

The run, in numbers

- 22 decisions logged by the AI

- 4 times it asked me for help

- 3 independent review phases

- 2 cross-phase bugs caught

- 1 critical bug that didn’t ship

- 14 tests added

- ~3 hours autonomous work

- $0 in AI tooling costs

The team (and what each role did)

gstack splits the sprint across a set of specialised AI reviewers, each one tuned for a different seat at the table. It’s the same mental model as putting a founder, a designer, an engineer, a QA lead, and a release engineer on a cross-functional team — except all of them are the same underlying AI model, invoked with different instructions and given different things to care about.

Translated into the roles you’d recognise from any real software team:

The CEO reviewer

What it does (the role): Challenges whether the plan is actually worth building. Asks “Is this the simplest version?” and “Could we ship less and still ship value?” — the same questions a founder asks in a product review.

What it did this time: Flagged the export popup as two extra days of work for something 95% of users would never touch. Recommended shipping the simple version first and only adding complexity if users asked for it.

The design reviewer

What it does (the role): Looks at the planned interface from the user’s side of the screen — information hierarchy, button labels, accessibility, what happens on each state. The senior designer you’d want catching spec gaps before code starts.

What it did this time: Caught the same issue as the CEO reviewer from a different angle — the plan had the primary flow inverted (click button, open popup, choose options, then download), when the right flow is click-and-download, with the popup only as an escape hatch for the 5% of users who actually want to pick columns.

The engineering reviewer

What it does (the role): Looks at the technical architecture, test coverage, performance, and security of the plan before any code is written. The staff engineer who gets to say “wait, have we thought about this?”

What it did this time: Produced the single most valuable catch of the sprint — the silent 1,000-row database cap that would have quietly corrupted every export once the portfolio crossed a thousand entries. Also flagged the missing tests.

Office hours

What it does (the role): Runs forcing questions on a half-formed idea before you commit to building it. The equivalent of sitting down with a Y Combinator partner for thirty minutes and getting asked “Do you actually need to build this?”

What it did this time: Not triggered on this task — the plan came in sharp enough to skip it. On earlier runs it’s where I pressure-test whether a thing is worth building at all. Available, just not needed this time.

The code reviewer

What it does (the role): Reads the finished code after it’s written but before it’s submitted for QA. Looks for the classes of mistake that slip past the author because they’ve been staring at the same file for too long.

What it did this time: Flagged two issues in the finished code — a subtle bug in how a timer was cleaned up (a race condition that would have occasionally misfired), and missing size limits on user input that could have caused performance issues under adversarial conditions. Five-line fixes each. Both got applied before the PR opened.

QA

What it does (the role): Clicks through the feature in a real browser — the same work a manual QA lead would do. Tests the happy path, the empty state, the edge cases, and anything that could plausibly break.

What it did this time: Ran five end-to-end tests through an invisible automated browser against a local copy of the app. All five passed. Notably, it refused to accept my password pasted into chat — it had me drop the credentials into a temporary file it could shred afterwards, so the password never entered the conversation history.

The release engineer

What it does (the role): Handles the mechanics of opening a pull request — re-running all tests, writing a clean PR description, making sure the branch is in a state where a reviewer (or a deploy system) can trust it.

What it did this time: Re-ran all 14 tests, typecheck, and lint. All green. Opened the pull request via GitHub’s command-line tool. One thing it couldn’t fix on its own — pushing code over the default secure channel failed from inside its environment — so it asked me for permission to switch authentication methods. Granted, once.

The deploy engineer

What it does (the role): Merges the PR, watches the deploy land, and runs a safety check against the live site before declaring the feature shipped. Where the rubber meets the road.

What it did this time: Refused to run its safety check against a temporary preview URL — insisted on the real production address. I didn’t have one configured yet. Adding it turned out to be the moment that surfaced a completely separate bug — production had been silently broken for weeks. That story is two sections below.

When it stopped and asked

The autonomous part of “autonomous” is where AI agents most often earn their bad reputation: pressing on through ambiguity, guessing at credentials, committing things nobody wanted committed. This run had five escalations, each with enumerated options and a recommended path. Not once did it guess.

Five moments it stopped and asked

- Linux’s security policy blocked the automated browser from launching. Three options offered (system-wide unload, per-profile exception, `--no-sandbox`); recommended per-profile.

- The project needed a configuration file that wasn’t there yet. Asked for the values rather than guessing defaults.

- QA needed a login to test the signed-in flow. Refused to accept a password in chat. Suggested a shreddable temp file so the password never entered the transcript.

- It couldn’t push code to GitHub using the default authentication method. Proposed switching to a different method via GitHub’s command-line tool. One command.

- The deploy tool refused to run its safety check against a temporary preview URL. Asked for the real production URL. I didn’t have one configured — adding it revealed that production had been broken for weeks.

The production bug it surfaced by accident

That fifth escalation turned out to be the one that mattered most. I added domains.vasko.com.au via Cloudflare (the domain name service), pointed it at the project on Vercel (the hosting platform), and ran the canary — a safety check that hits the live site right after deploy and confirms the important pages are actually working. The canary failed. Production had been returning server errors for weeks because Supabase (the database behind the app) had a security allowlist that didn’t include any real address I’d been using. Nobody had noticed — I hadn’t sent the URL to anyone.

The bug was mine. The discovery was the process.

If the deploy tool had been willing to run the canary against whatever URL was handy, I would never have looked.

The server errors were also being amplified by LeakIX scanners from DigitalOcean — automated security researchers who probe every new domain within hours of it going live — hammering every path the site had ever exposed. Put a domain on the public internet and you have three hours before someone starts looking. That part I expected.

The moment it caught itself

Before the PR opened, gstack ran its own post-implementation retrospective. I read it back. It had caught itself making a mistake.

During implementation it had swapped the planned test coverage layer — route-level, as specified in the reviewed plan — for something more pragmatic: module-level, fourteen tests instead of five. The tests were better than the plan called for. But the swap was unilateral, and gstack flagged itself for it:

Wrong threshold. Since the plan went through autoplan’s three-review gauntlet, the layer spec was itself a reviewed decision, not an implementation detail. Logged as feedback memory: `feedback_escalate_plan_deviations.md`.

It named the mistake precisely, diagnosed why (confused “implementation detail” for “reviewed-plan decision”), and wrote the correction to persistent memory so future runs escalate instead of deciding on their own. This is rare behaviour in current AI agents. It is ordinary behaviour in senior engineers after a retrospective.

I want to name something plainly: Garry Tan built gstack, Peter Steinberger built OpenClaw (247K stars, essentially solo), and Andrej Karpathy articulated the shift in how software is written that put pressure on the whole scene to catch up. None of them charged me anything this morning, and none of them gatekept anything behind a waitlist. Open source drifted for years toward VC-backed “open core” and projects that closed the second they got traction; gstack is 75K stars, MIT, no paid tier, and OpenClaw is the same shape. The pre-corporate open-source ethos is still alive in places, and this morning it did a day’s work for me.

Right. Back to the receipts.

Who built this

- Andrej Karpathy — articulated the shift.

- Peter Steinberger — OpenClaw (247K stars, MIT).

- Garry Tan — gstack (75K stars, MIT; also President of YC).

My own Sally agent runs on OpenClaw, which is how I found gstack. The open-source AI agent ecosystem isn’t a dozen competing platforms — it’s a small set of composable primitives that different people wire together differently.

The takeaway is not that AI is replacing engineers, and it is not that AI is the future. Hold your existing position on both — this article doesn’t challenge either. What it shows is narrower. Standard Claude Code, left alone, would have built the modal, deferred the tests, and silently truncated at a thousand rows. Same underlying model. Different scaffolding. Different outcome. The frontier worth watching is not bigger models. It is better process around them.

This was a Tuesday.

Timeline

- 07:47: UM890 Pro out of bubble wrap

- ~09:30: Ubuntu Server 24.04 installed, Tailscale configured, headless SSH working

- ~11:15: Claude Code + gstack installed (after a detour for unzip, AppArmor, and one missing `.env.local`)

- ~12:00: `/autoplan` completes with 22 decisions logged, 4 taste gates approved, 2 cross-phase catches (modal overbuilt, tests deferred = never)

- ~12:45: Implementation ships as 8 atomic commits across `csv-columns`, `POST /api/domains/export`, download hook, UI, 14 vitest tests

- ~13:00: `/review` flags a stale setTimeout race and missing zod input caps. Fixes both.

- ~13:45: `/qa` runs headless Chromium against local dev server, 5/5 golden paths pass, 95/100 health score

- ~14:15: `/ship` opens PR #1, all gates green

- ~14:30: `/land-and-deploy` prompts for a production URL I didn’t have. Adds `domains.vasko.com.au` via Cloudflare DNS → discovers prod has been quietly returning 500s and auth is broken on the new origin

- ~15:15: Supabase auth allowlist fixed, Vercel env vars reconciled, production healthy

- ~15:35: PR merges. Canary verifies production. Done.

The technical receipts

The rest of this post is for engineers, architects, and technical readers who want to see what actually happened under the hood. Skip it without guilt if you’re not that person — the article’s finished above.

For the rest of you: a slightly-less-breezy version of what gstack actually did at each phase. Every phase produced an artefact; every artefact fed the next phase.

Plan phase — /autoplan

- Input: 9 Linear tickets pulled via MCP (research → interface → endpoint → UI → client logic → filtering → security → tests → docs).

- Three review subagents ran in sequence: CEO mode (scope/premise), Design (hierarchy/states/a11y/microcopy), Engineering (architecture/tests/perf/security).

- Each review independently scored the plan, flagged findings, proposed edits. Findings that surfaced in two or three reviews became consensus catches.

- Output: a single plan file (`main-csv-export-plan-*.md`) with 22 logged decisions, 4 escalated to me, ASCII architecture diagrams, locked microcopy, locked a11y spec, and 9 Linear tickets mapped to one PR with explicit dispositions (merged, deferred, scoped-down, closed-no-work).

- ~5 minutes end-to-end.

Why this matters: Most AI-assisted coding starts with a single prompt. This starts with a plan that’s already survived three adversarial reviews and carries its own decision log forward into build.
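The consensus rule is simple enough to sketch. The TypeScript below is an illustration of the idea only, not gstack's implementation: it groups findings by issue and keeps the ones raised by at least two distinct reviewers. The `Finding` shape and the issue labels are hypothetical.

```typescript
type Reviewer = "ceo" | "design" | "engineering";

interface Finding {
  reviewer: Reviewer;
  // Short label for the issue (hypothetical naming for this sketch).
  issue: string;
}

// Return the issues flagged by at least `minReviewers` distinct reviewers,
// in the order they were first raised.
function consensusCatches(findings: Finding[], minReviewers: number = 2): string[] {
  const reviewersByIssue: Record<string, Reviewer[]> = {};
  for (const f of findings) {
    const seen = reviewersByIssue[f.issue] || (reviewersByIssue[f.issue] = []);
    // Count each reviewer once per issue, even if they raise it twice.
    if (seen.indexOf(f.reviewer) === -1) seen.push(f.reviewer);
  }
  return Object.keys(reviewersByIssue).filter(
    (issue) => reviewersByIssue[issue].length >= minReviewers
  );
}

// The three catches from this run, as described earlier in the article.
const findings: Finding[] = [
  { reviewer: "ceo", issue: "modal-overbuilt" },
  { reviewer: "design", issue: "modal-overbuilt" },
  { reviewer: "engineering", issue: "tests-deferred" },
  { reviewer: "ceo", issue: "tests-deferred" },
  { reviewer: "engineering", issue: "row-cap-truncation" },
];

console.log(consensusCatches(findings)); // ["modal-overbuilt", "tests-deferred"]
```

Note what the rule does not do: the row-cap bug came from one reviewer only, so it is not a consensus catch, yet it was the most important finding. Consensus is a signal for framing blind spots, not a severity filter.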

Build phase — implementation

- Executed against the approved plan, not a fresh prompt. The plan file was the source of truth.

- 8 atomic commits in sequence, each reviewable on its own, rather than one giant PR diff that’s impossible to reason about. The commit history itself becomes review material; bisect works; each commit has a clean, self-contained scope.

- The 8 commits, one per logical unit:

  - vitest infrastructure (no test runner existed before this).

  - `csv-columns` module (single source of truth for what gets exported).

  - `POST /api/domains/export` (route with zod validation, explicit projection, truncation guard, per-column transforms, UTF-8 BOM, CSV-injection guard).

  - `use-csv-download` client hook.

  - Export button + kebab menu + options modal (design-reviewed button-as-primary-action, not the modal-first flow in the original plan).

  - 14 vitest tests plus bug fix for empty-result path.

  - `docs/csv-export.md`.

  - Two `/review` fix commits (zod max caps, stale setTimeout ref).

- ~25 minutes.

Why this matters: The plan file is the source of truth, not the prompt. Deviations from the plan (like the unilateral test-layer swap) get caught in the retrospective because there’s a written contract to deviate from. Standard prompt-driven AI coding has nothing to deviate from in the first place.
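Three of the route's safeguards (explicit projection, UTF-8 BOM, CSV-injection guard) are easy to show in isolation. The sketch below is my own minimal TypeScript version under stated assumptions, not the code from the PR: function names are invented, all cells are treated as strings, and the injection guard follows the common approach of prefixing formula-like cells with a single quote.

```typescript
// Leading characters that spreadsheet apps may interpret as the start of a formula.
const FORMULA_TRIGGERS = ["=", "+", "-", "@", "\t", "\r"];

// Byte order mark, so Excel reads the file as UTF-8.
const UTF8_BOM = "\uFEFF";

// Neutralise formula-like cells, then quote the cell if it contains CSV metacharacters.
function escapeCell(value: string): string {
  const startsLikeFormula = FORMULA_TRIGGERS.indexOf(value.charAt(0)) !== -1;
  const guarded = startsLikeFormula ? "'" + value : value;
  const needsQuotes = /[",\r\n]/.test(guarded);
  return needsQuotes ? '"' + guarded.replace(/"/g, '""') + '"' : guarded;
}

// Explicit projection: only the listed columns are emitted, in the listed order.
// Any other keys on a row are ignored; missing values become empty cells.
function toCsv(columns: string[], rows: Record<string, string | undefined>[]): string {
  const header = columns.map(escapeCell).join(",");
  const lines = rows.map((row) =>
    columns.map((col) => escapeCell(row[col] || "")).join(",")
  );
  return UTF8_BOM + [header].concat(lines).join("\r\n") + "\r\n";
}
```

One trade-off worth knowing: a blanket guard like this also prefixes legitimate values that begin with `-` or `+`, such as negative numbers. That is acceptable for text-heavy exports, but numeric columns would need their own handling.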

Review phase — /review

- Static analysis of the diff against main.

- Ran its own subagent review. Flagged 2 issues: a stale `setTimeout` race in the download hook (real but low-impact) and missing `.max(200)` zod caps on array inputs (defensive).

- Asked before fixing. I said fix both. Five-line changes.

- Produced a PR quality score with deductions explained (8/10: −1 race, −1 plan deviation on test layer).

- ~5 minutes.

Why this matters: A second AI pass against the diff catches the class of bug the author missed because they’ve been staring at the same code for too long. Different from the plan-phase reviews — those ran against the plan, this runs against the actual diff.
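To make the input-cap finding concrete, here is a dependency-free TypeScript sketch of the same idea. The actual fix used zod, where the cap is roughly `z.array(z.string()).max(200)`; the hand-rolled validator below is a stand-in so the example runs on its own, and the field names `ids` and `columns` are assumptions rather than the real request shape.

```typescript
// Cap taken from the review finding.
const MAX_ITEMS = 200;

// Hypothetical request shape for the export endpoint.
interface ExportRequest {
  ids: string[];
  columns: string[];
}

// Accept a missing field as an empty list; reject non-arrays, oversized arrays,
// and arrays containing anything other than strings.
function parseStringArray(value: unknown, field: string): string[] {
  if (value === undefined) return [];
  if (!Array.isArray(value)) throw new Error(`${field} must be an array`);
  if (value.length > MAX_ITEMS) {
    throw new Error(`${field} has ${value.length} items; the limit is ${MAX_ITEMS}`);
  }
  for (const item of value) {
    if (typeof item !== "string") throw new Error(`${field} must contain only strings`);
  }
  return value as string[];
}

function parseExportRequest(body: unknown): ExportRequest {
  if (typeof body !== "object" || body === null) throw new Error("body must be an object");
  const raw = body as Record<string, unknown>;
  return {
    ids: parseStringArray(raw["ids"], "ids"),
    columns: parseStringArray(raw["columns"], "columns"),
  };
}
```

Without a cap, a request carrying a very large array is parsed and passed to the database before anything rejects it. Bounding the input at the edge is cheap and keeps the worst case predictable.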

QA phase — /qa

- Launched headless Chromium via Playwright. Required an AppArmor exception for unprivileged user namespaces on Ubuntu 24.04 — escalated, I applied it.

- Required a `.env.local` pointing at the same Supabase project as production. Escalated, I populated it.

- Credentials handled carefully. Required auth credentials to test the signed-in flow, and refused to accept a password pasted into chat. Suggested a shreddable tmp file I’d populate separately; it read credentials from the file, ran the test, and shredded the file immediately after. Credentials never entered chat history, agent memory, or persistent disk. Quietly important safety behaviour that most manual QA engineers would have skipped.

- Ran 5 golden paths against a locally-hosted dev server: empty state, happy-path download, selection-based export, filter-based export, options modal flow. 5/5 passed. 95/100 health score (5 points off for minor lint warnings).

- ~10 minutes.

Why this matters: Automated browser testing that also enforces a credentials hygiene pattern. The same agent that clicks through the UI keeps secrets out of the transcript without needing to be asked.

Ship phase — /ship → /land-and-deploy

- Re-ran all test suites (14/14 vitest, TypeScript clean, lint clean).

- Branch pushed, PR opened via

gh. SSH auth failed in the agent’s subshell; suggested switching the origin to HTTPS withgh’s credential helper. One-time fix. /land-and-deployblocked on a missing production URL. I addeddomains.vasko.com.auvia Cloudflare DNS plus Vercel. First canary failed — the Supabase auth allowlist didn’t include the new origin. Fixed in the Supabase dashboard. Re-ran canary. Green.- PR merged to main. Vercel auto-deployed. Canary verified:

/loginreturns 200,/domainsredirects to/loginvia middleware (expected), root/catch-all returns 404 (by design). - Pre-merge readiness report written. Deploy report saved to

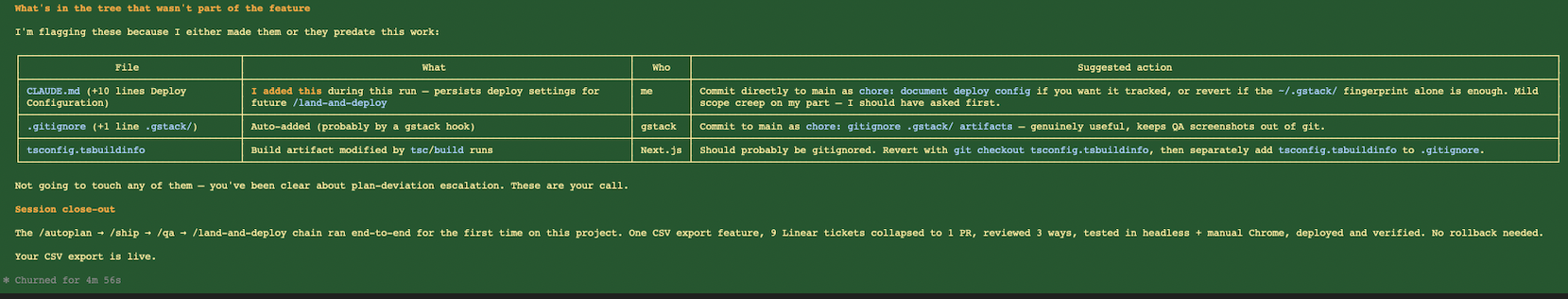

.gstack/deploy-reports/. Session close-out written, classifying every change in the working tree by who made it. - ~15 minutes, including the ~10 minutes I spent fixing the production URL and auth allowlist.

Why this matters: The deploy phase treated production URLs as a distinct, non-substitutable input. Canarying against a Vercel preview URL looks the same on paper but exercises none of the DNS, CORS, auth, or middleware configuration that actually matters. Refusing the substitution is what surfaced the real production bug.
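A rough TypeScript sketch of what a canary check like this boils down to, using the three expectations listed above. This is not gstack's code. The types and function names are invented, the redirect check accepts any 3xx status because the article does not say which one the middleware uses, and treating every `*.vercel.app` hostname as a preview is a deliberately crude assumption for illustration.

```typescript
// Either an exact status is expected, or a redirect to a given path.
type CanaryExpectation =
  | { path: string; status: number }
  | { path: string; redirectsTo: string };

// What was actually observed for one path (hypothetical shape).
interface CanaryObservation {
  path: string;
  status: number;
  location?: string;
}

// Crude heuristic (assumption): anything on *.vercel.app is not the production host.
function isPreviewHost(hostname: string): boolean {
  return /\.vercel\.app$/i.test(hostname);
}

// Compare observations against expectations and describe every mismatch.
function failedChecks(expected: CanaryExpectation[], observed: CanaryObservation[]): string[] {
  const failures: string[] = [];
  for (const exp of expected) {
    const obs = observed.filter((o) => o.path === exp.path)[0];
    if (!obs) {
      failures.push(`${exp.path}: no response recorded`);
    } else if ("redirectsTo" in exp) {
      const isRedirect = obs.status >= 300 && obs.status < 400;
      if (!isRedirect || obs.location !== exp.redirectsTo) {
        failures.push(`${exp.path}: expected redirect to ${exp.redirectsTo}, got ${obs.status}`);
      }
    } else if (obs.status !== exp.status) {
      failures.push(`${exp.path}: expected ${exp.status}, got ${obs.status}`);
    }
  }
  return failures;
}

// The expectations described in the canary results above.
const expectations: CanaryExpectation[] = [
  { path: "/login", status: 200 },
  { path: "/domains", redirectsTo: "/login" },
  { path: "/", status: 404 },
];
```

An empty list from `failedChecks` means the canary is green. The host check runs first and is the part that matters for this story: a canary that accepts any reachable URL can pass while the real domain is broken.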

The artefact chain

Every phase produced a file that fed the next phase. No phase started from a fresh prompt; each had the receipts from the previous one to work from.

- Plan phase → `main-csv-export-plan-*.md` (the reviewed, decision-logged source of truth).

- Build phase → the plan file plus 8 atomic commits (each a reviewable artefact on its own).

- Review phase → PR quality score with annotated deductions, fed into `/qa`.

- QA phase → health score report, screenshots, and golden-path results, fed into `/ship`.

- Ship phase → pre-merge readiness report, fed into `/land-and-deploy`.

- Land-and-deploy → deploy report, canary results, and session close-out (classifying every change in the working tree by who made it: me, gstack, or Next.js scaffolding).

This is what “sprint structure” actually means in practice. Not a chain of prompts — a chain of artefacts, each one reviewed and signed off before the next phase starts. The thesis of the article, in technical form: if you want to trust an autonomous AI engineering loop, you have to be able to audit every step after the fact. Artefacts make that possible; chat transcripts do not.

Bubble wrap to shipped PR: roughly eight hours, with five moments of “the AI stopped and asked” and one genuinely embarrassing discovery.